Case Study #1: When Virtualizing a Layer Was the Right Call

This is a case study about the moment a standard best practice became the wrong answer. Following the playbook was the problem, and understanding why the playbook exists was what allowed us to solve it.

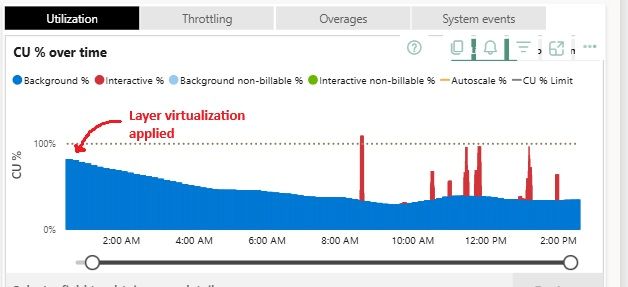

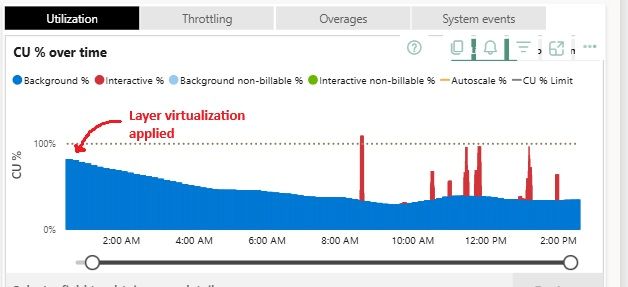

Our platform was under serious pressure. Background processing was consuming around 85% of our Fabric capacity, and the environment was effectively breaking down during peak hours. We needed to find the source and fix it fast.

Finding the offender

We used the Fabric Capacity Metrics app to investigate, and the culprit was quickly apparent. The most critical pipeline in the environment, which processes ERP data covering finance, sales, supply, e-commerce, product, and customer data, was responsible for nearly half of total capacity consumption on its own. This was the heart of the team’s data platform: it ran overnight and fed everything downstream.

What we found in the architecture

When we dug into the pipeline, we found something that was easy to overlook: the silver layer applied very few transformations, namely type casting and column renaming. That was it.

And it was being read only a handful of times a day.

In other words, we were paying a heavy processing cost to maintain a full physical materialization of a layer that barely changed the data and was rarely accessed. It existed because that is how you build it: medallion architecture, with bronze, silver, and gold layers, by the book.

But understanding why the silver layer exists in the first place gave us the clarity to question it. Its purpose is to provide a clean, conformed, reusable intermediate layer. When the transformations are minimal and the reads are rare, physical materialization stops being a benefit and starts being a cost: the pipeline pays to write the layer on every run, while a view applies its cheap casts and renames only when someone actually queries it.
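The trade-off in the previous paragraph can be framed as a rough, back-of-the-envelope comparison. The sketch below is a hypothetical model, not the method used in this case study: `LayerProfile`, `should_materialize`, and every number in it are invented for illustration, and it assumes the base scan costs about the same whether consumers read a view or a table, so only the repeated transform work is weighed against the cost of rewriting the tables.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LayerProfile:
    """Hypothetical daily cost profile of one layer, in arbitrary capacity units."""

    refresh_cost: float             # cost of one (assumed daily) pipeline run that rewrites the physical tables
    reads_per_day: int              # how many times downstream consumers query the layer per day
    transform_cost_per_read: float  # extra cost a view pays to re-apply its transforms on each read


def should_materialize(p: LayerProfile) -> bool:
    """Return True when re-applying the transforms on every read costs more than refreshing tables.

    Simplification: the base scan is assumed to cost the same for a view and a table, so only
    the transform work (view) and the rewrite (table) are compared; storage is ignored.
    """
    virtual_cost = p.reads_per_day * p.transform_cost_per_read
    return virtual_cost > p.refresh_cost


# Made-up numbers, not measurements from the case study.
light_layer = LayerProfile(refresh_cost=100.0, reads_per_day=4, transform_cost_per_read=2.0)
heavy_layer = LayerProfile(refresh_cost=100.0, reads_per_day=200, transform_cost_per_read=5.0)

print(should_materialize(light_layer))  # False: 4 * 2.0 = 8.0, well below 100.0
print(should_materialize(heavy_layer))  # True: 200 * 5.0 = 1000.0, above 100.0
```

A layer with cheap transforms and few reads, like the silver layer described here, lands on the "view" side of this comparison; materialization only starts to pay off when expensive logic is queried often.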

The solution

We virtualized the silver layer, replacing the physical tables with SQL views built on the SQL endpoint of the silver lakehouse. The casts and renames that the pipeline used to write out now run only when a view is queried.
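The case study does not show the view definitions. As a hypothetical sketch, assuming each silver view only casts and renames columns and reads a bronze table through a three-part name, the T-SQL could be generated from a per-table column mapping so that many views stay consistent. `ColumnSpec`, `build_silver_view`, and all lakehouse, table, and column names below are invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ColumnSpec:
    source: str    # column name in the source (bronze) table; hypothetical
    target: str    # renamed column exposed by the silver view; hypothetical
    sql_type: str  # target T-SQL type, e.g. "DATE" or "DECIMAL(18, 2)"


def quote(identifier: str) -> str:
    """Bracket-quote a T-SQL identifier, escaping any closing bracket inside it."""
    return "[" + identifier.replace("]", "]]") + "]"


def build_silver_view(view_name: str, source_table: str, columns: list[ColumnSpec]) -> str:
    """Return a CREATE VIEW statement that only casts and renames columns.

    `view_name` and `source_table` are expected to be already quoted and qualified.
    """
    select_list = ",\n    ".join(
        f"CAST({quote(c.source)} AS {c.sql_type}) AS {quote(c.target)}" for c in columns
    )
    return f"CREATE VIEW {view_name} AS\nSELECT\n    {select_list}\nFROM {source_table};"


# Hypothetical names: nothing here comes from the original pipeline.
ddl = build_silver_view(
    "[dbo].[sales_orders]",
    "[bronze_lakehouse].[dbo].[erp_sales_orders]",
    [
        ColumnSpec("ORD_NO", "order_id", "BIGINT"),
        ColumnSpec("ORD_DT", "order_date", "DATE"),
        ColumnSpec("NET_AMT", "net_amount", "DECIMAL(18, 2)"),
    ],
)
print(ddl)
```

Generating the statements from a mapping keeps the silver layer declarative: changing a type or a column name means editing one entry and re-running the script, rather than reprocessing data.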

This worked cleanly in our specific context. All tables were read a few times a day at most. The complex logic sat in the gold layer, which was already built as views and did the real heavy lifting. If any layer was a candidate for materialization, it was gold, not silver. Silver, with its minimal transformations, had nothing to gain from being materialized.

The swap had no downstream impact. Consumers of the silver layer continued working exactly as before.

Results

- Background processing: 85% → 35% of capacity
- Overnight pipeline processing time: 5 h 40 min → 2 h
- Zero downstream disruption
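For scale, the two headline numbers can be expressed as relative reductions. This short sketch only re-derives them from the figures in the results list and adds no new measurements; `relative_reduction` is a helper named here for illustration.

```python
def relative_reduction(before: float, after: float) -> float:
    """Fractional reduction from `before` to `after` (0.25 means 25% lower)."""
    return (before - after) / before


# Background processing share, in percent of capacity.
capacity_drop_points = 85 - 35                  # 50 percentage points
capacity_drop_rel = relative_reduction(85, 35)  # 50 / 85, about 0.588

# Overnight run time in minutes: 5 h 40 min = 340 min, 2 h = 120 min.
runtime_drop_rel = relative_reduction(5 * 60 + 40, 2 * 60)  # 220 / 340, about 0.647

print(f"Background load: -{capacity_drop_points} points ({capacity_drop_rel:.0%} relative)")
print(f"Overnight run time: {runtime_drop_rel:.0%} shorter")
```

In other words, background load fell by roughly 59% relative to where it started, and the overnight run became roughly 65% shorter.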

What this taught me

Rules exist to teach you fundamentals. Fundamentals exist so you can break them intelligently.

Following standard practices is how you build the maturity to know when they do not apply. In this case, the silver layer existed because that is what the architecture calls for, not because it was solving a real problem. Recognizing that distinction, and being confident enough to act on it, was what made the difference.