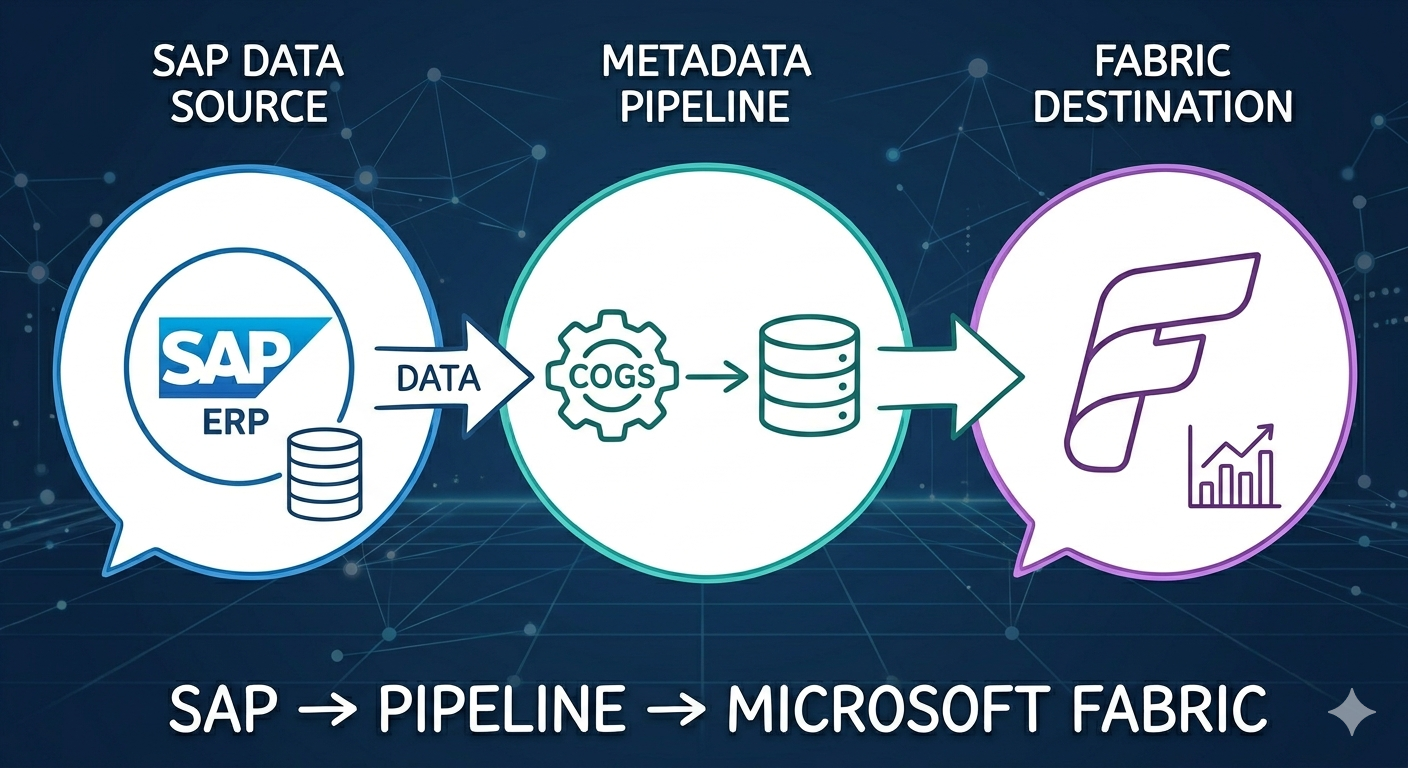

Case Study #3: Building a Metadata-Driven Ingestion Pipeline — 85 Tables, One Framework

This is a case study about a problem I genuinely enjoyed solving. There is something satisfying about designing a system that removes itself from the equation — where adding new work stops requiring more engineering effort. That is what this was about.

I was the only data engineer responsible for ingesting data from a large ERP system. The system contained dozens of tables relevant to the business — financial records, transactional data, master data — each with its own structure, update frequency, and ingestion requirements.

Building individual pipelines for each table was not an option. Every new table would mean a new pipeline to develop, test, and maintain. For a single engineer, that is unsustainable. For the business, it means slow delivery and growing technical debt.

The answer was a metadata-driven pipeline.

The design

The core of the framework was a metadata table — a configuration layer describing each dataset the pipeline needed to ingest. Each row represented one table and contained:

- Table name

- Primary key(s)

- Schema definition — column names and expected types

- Load type: full or incremental

- For incremental tables: a timestamp watermark for change detection

- An action column: INSERT, UPDATE, or DELETE per row

The pipeline read this table at runtime and adapted its behavior accordingly. Full-load tables were truncated and reloaded. Incremental tables used the watermark to extract only new or changed records, then applied upsert logic — including hard deletes for rows flagged as removed.

Adding a new table to the ingestion scope meant updating one row in the metadata table. From configuration to first successful load: under 5 minutes.

The hard parts

Everything arrived as strings

The source system exported all data as string type. Every column — dates, integers, decimals, booleans — arrived as text. The pipeline was responsible for casting each field to its correct type based on the schema defined in the metadata table.

Decimal handling was particularly complex

Negative numbers arrived in a non-standard format: 45,78- instead of -45.78. The transformation had to replace the comma with a decimal point and move the negative sign from right to left.

The harder problem was that some columns were not purely numeric. The same column that held decimal values in most rows could hold a string code in others. Blindly casting those columns would break the pipeline. The schema definition had to encode not just the target type, but whether the cast should be applied — per column, per table — allowing the framework to handle each field correctly without table-specific logic.

Results

- 85 tables ingested daily, some multiple times per day

- New table onboarding: under 5 minutes

- One engineer maintaining the full ingestion layer without pipeline sprawl

What this taught me

Metadata-driven design is a force multiplier. The upfront investment in a solid framework pays back every time a new table needs to be added — and in a large ERP context, that happens constantly.

The framework itself is not the hard part. The hard part is handling the messiness of the source data in a generic way. Getting the schema definition layer right — precise enough to encode exceptions, flexible enough to cover new cases — is where most of the real engineering lives.