Case Study #4: Data Quality Beyond Null Checks — Building a Proactive Monitor for Production Pipelines

I want to share a case study that I think about often when the topic of data quality comes up. Not because the technical implementation was particularly complex, but because of what it changed operationally — and the moment it paid for itself in a way that was hard to ignore.

We were running weekly ingestion pipelines for critical market data used in official reports and daily business decisions. Trust in the data was a real problem.

Duplicate records were appearing. Source files sometimes arrived structurally valid but incomplete — no schema violations, no broken formats, just missing data. And the way we found out was always the same: a business analyst flagging the issue hours after it had already been delivered.

The goal was to build something that caught problems before data reached end users.

The design

A framework, not a script

I built the monitor as an OOP Python/PySpark framework. The design goal was that adding a new test should require writing only a SQL query — not modifying pipeline code or understanding the framework internals. A developer who knew the data could write a new test in minutes.

Tests ran inside the pipeline, triggered after each transformation layer completed. Results were written to a log table in the data lake, keeping a full history of test runs, failures, and affected records.

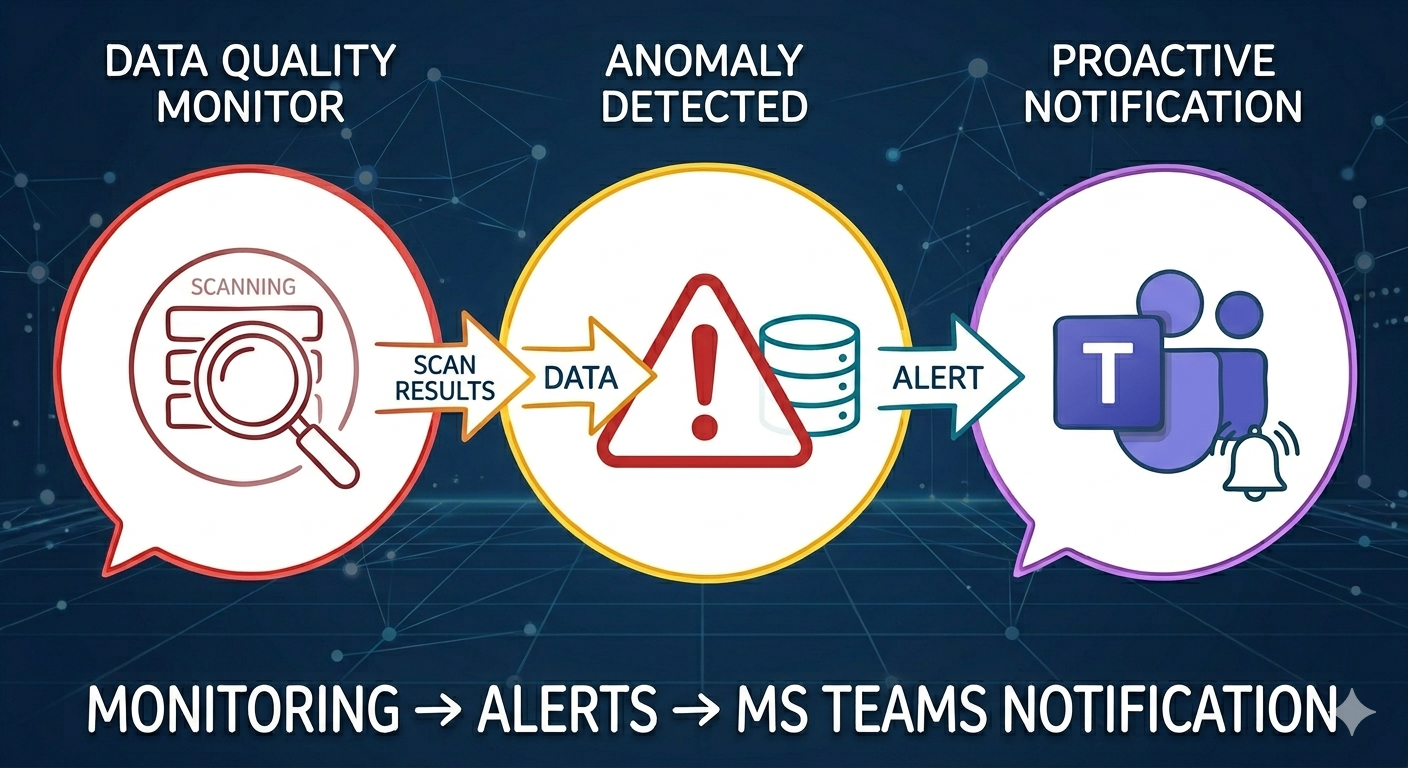

Proactive alerting via webhook

Instead of checking a log table every morning, the framework sent results to a messaging channel via webhook the moment a layer finished executing. The team received a message immediately: which tests passed, which failed, and what the failures contained.

No manual checking. No discovering problems when someone calls.

Business logic testing

The most valuable tests were not the obvious ones. Null checks and row counts catch structural problems. Business logic tests catch domain problems — things the schema cannot validate.

One example: certain stores from specific regions were not permitted to sell a particular product category. When those transactions appeared in the data, they were not real sales — they were being accounted for elsewhere, and including them would lead to double-counting in reports. We built a test that identified and quarantined those records before they reached the reporting layer.

This type of test requires knowing the data domain. It cannot be generated automatically.

The moment it proved its value

The clearest demonstration came from missing geographic data. Sales data for a given week was supposed to cover the full territory. One week, data for a specific region was simply absent from the source file. Not corrupted, not malformed — structurally valid. Just missing.

Before this system, that gap would have been discovered by an analyst mid-morning.

With the monitor in place, the absence was detected during ingestion. Before business teams started their day, the team had already identified exactly what was missing, contacted the data provider with a precise description of the gap, and started working on the resolution.

The business received an explanation before they had even noticed the problem.

What this taught me

Data quality infrastructure is not about catching every possible error. It is about catching your errors — the ones that matter in your domain, at your cadence, before they reach the people who depend on the data.

Null checks are the floor, not the ceiling. The tests that actually protect your pipeline are the ones that encode your business knowledge, not just your data engineering knowledge.